- Notebooks Create Documents Organize Files Manage Tasks 1 3 1/4

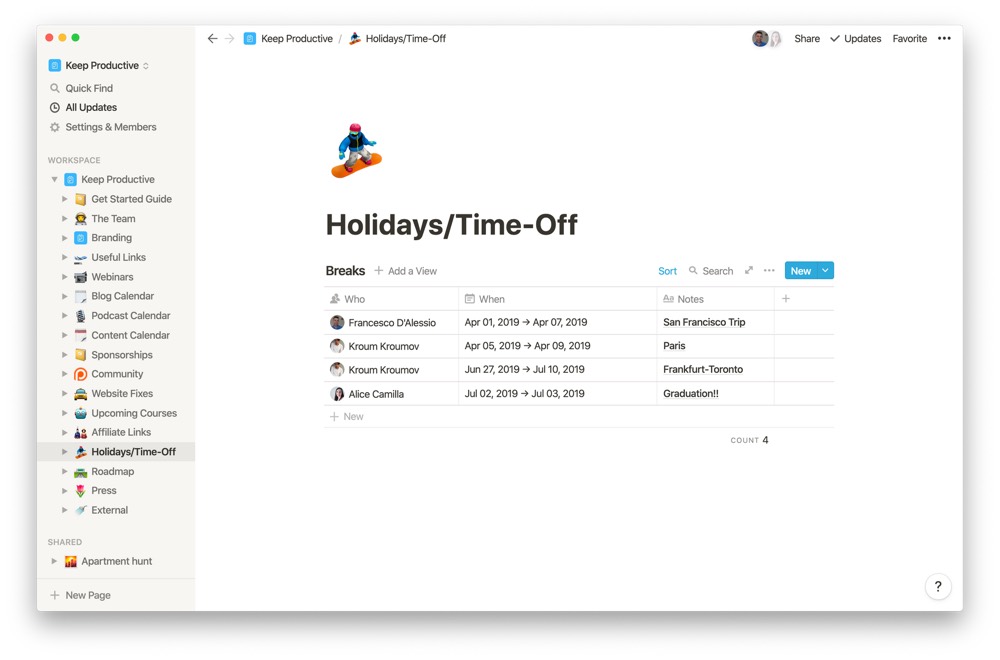

Organizing your papers in a few small binders makes it easier to tote one or two around with you to appointments. Try storing them upright and organized on the counter with the help of a dish.

You can either create a blank notebook from scratch or copy content from an existing Class Notebook. You can also use Class Notebook in a channel: each time you create a new channel in a class team, a new section is automatically created in the Class Notebook’s Collaboration Space. All students and teachers can edit and work on OneNote pages within a channel.

In small business offices, usually only one or two people know how the office files are organized. As a result, those people are interrupted several times a day with questions about where paper files are in the archives. And when the person who knows how the office files are organized leaves, or works only part-time, office productivity can be seriously disrupted.

Ask yourself these questions: Will the new part-timers know where everything is? If there is an emergency and you need to hire a temp, will it be easy for that person to find everything in the office? Will the other people who occasionally use the files and papers know where important documents are stored, or will they be unable to work in that environment? These questions should make you think about what needs to be done in an office so that everyone can access the important papers without needing assistance.

Below are tips to organize office files so anyone in the office will know where they go:

Tip 1: Draw out a floor plan (or Office Map) and include all the filing cabinets that you use to file papers. It doesn’t have to look like an architect designed it. It just needs to include all the spaces where files are stored. Use squares and rectangles for filing cabinets.

After you draw out the floor plan of the office, make extra copies. These copies are helpful for mapping other things that are stored in the office.

On the first office map, label each filing cabinet in the drawing with statements similar to the ones below.

- File payroll paperwork here.

- File payroll reports/confirmation sheets of payment here.

- Here is where I put expenses to be invoiced.

- Outstanding invoices filed here.

- Place the bills to pay here.

- When you need to pay your bills, you will find them here.

- File the paid bills here.

- Register the contracts from clients here under the customer’s name.

- File client folders here.

- File conference paperwork here.

- Here is where I put my to-do files.

- Here is where I put my current projects.

How to share where you filed papers in a filing cabinet?

On another duplicate floor plan, mark the following areas and indicate what is in each zone.

Then, staple them together with the original floor plan:

- Shipping – Include a statement like this is where the stamps, envelopes, and return labels are located.

- Snack – Include statements like: this is where the supplies for the coffee maker are stored. This square is where the paper products are stored.

- Inventory – Include this area if you carry an inventory in your office. This area should have its own extensive (detailed) list of items.

- Office Supplies – List all the items located in this area. You can say something like “this is where the pens are stored, this is where the paper for the printer is stored, etc.”

- Conference Room area supplies – This location could include “cleaning supplies for the table are located in this cabinet.”

After you complete the office maps, pretend that you are new to the office and need something. Follow the map and see if you can find the right area of supplies you need. If not, revise your wording on the map. And, then have someone else do the same thing and see how that works for them as well.

Tip 2: Make procedure lists as thorough as possible. List every task in your office, as well as where to file the paperwork when the task is done. Review the list at least once a year to make sure it is up to date. This will help new employees transition more smoothly.

How to make procedure lists?

Need more information on how to make a procedure list or checklist? Visit this post: How to create checklist or procedure lists to improve productivity in your business

Tip 3: Make sure your filing cabinets have a system. Label the hanging folders with broad topics and label the folders inside them with more specific topics. A no-brainer, right? Not really. Many people do not know the difference between specific and broad topics. Before creating the system, write up a list of the topics you think you want to use on a piece of paper, then check it against your real files. Then, pretend you are a new person who knows nothing about the system. Review the system: does it work? If it doesn’t, fix the areas where steps are missing, and then implement the entire system in your filing cabinets.

Visit these posts for more information on how to make efficient filing systems.

Following these three tips will help you and your staff (temporary or not) work more productively in the office. Keep these items in a safe place and give a copy to everyone who may need them, such as your office manager, your spouse, and your employees.

What system works for you? Please share below.

Feel free to visit these links below from other experts regarding how to make a functional filing cabinet.

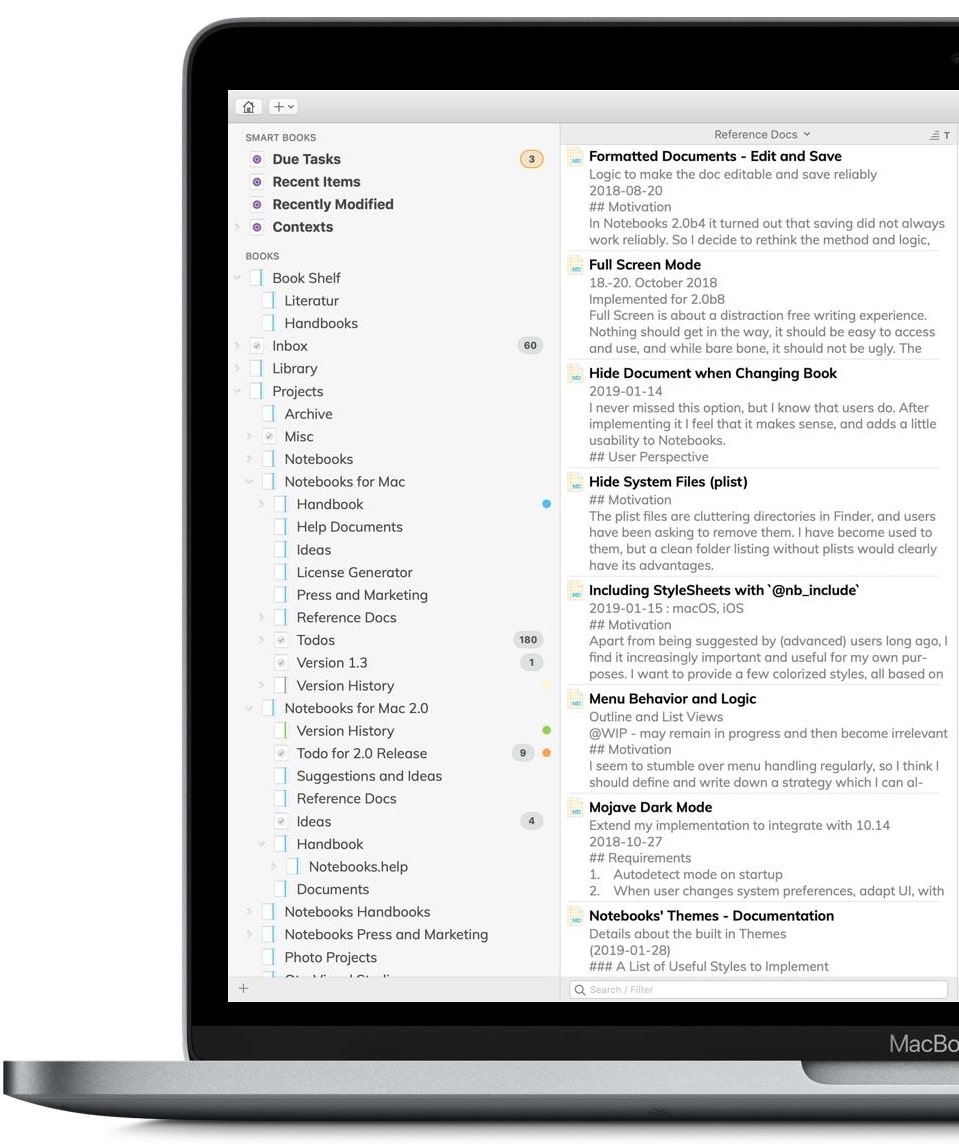

You can manage notebooks using the UI, the CLI, and by invoking the Workspace API. This article focuses on performing notebook tasks using the UI. For the other methods, see Databricks CLI and Workspace API.

Create a notebook

- Click the Workspace button or the Home button in the sidebar. Do one of the following:

- Next to any folder, click the drop-down menu on the right side of the text and select Create > Notebook.

- In the Workspace or a user folder, click the drop-down menu and select Create > Notebook.

- In the Create Notebook dialog, enter a name and select the notebook’s default language.

- If there are running clusters, the Cluster drop-down displays. Select the cluster you want to attach the notebook to.

- Click Create.

Open a notebook

In your workspace, click a notebook. The notebook path displays when you hover over the notebook title.

Delete a notebook

See Folders and Workspace object operations for information about how to access the workspace menu and delete notebooks or other items in the Workspace.

Copy notebook path

To copy a notebook file path without opening the notebook, right-click the notebook name or click the drop-down menu to the right of the notebook name and select Copy File Path.

Rename a notebook

To change the title of an open notebook, click the title and edit inline or click File > Rename.

Control access to a notebook

If your Databricks account has the Premium plan (or, for customers who subscribed to Databricks before March 3, 2020, the Operational Security package), you can use Workspace access control to control who has access to a notebook.

Notebook external formats

Databricks supports several notebook external formats:

- Source file: A file containing only source code statements with the extension .scala, .py, .sql, or .r.

- HTML: A Databricks notebook with the extension .html.

- DBC archive: A Databricks archive.

- IPython notebook: A Jupyter notebook with the extension .ipynb.

- RMarkdown: An R Markdown document with the extension .Rmd.
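As a rough illustration of the list above, the following sketch maps a file name to its external format. The function name and label strings are hypothetical, the .dbc extension is taken from the archive description later in this article, and case-insensitive matching is an assumption rather than documented behavior.

```python
from pathlib import PurePosixPath

# Extensions come from the list above; the label strings are illustrative only.
EXTERNAL_FORMATS = {
    ".scala": "Source file",
    ".py": "Source file",
    ".sql": "Source file",
    ".r": "Source file",
    ".html": "HTML",
    ".dbc": "DBC archive",
    ".ipynb": "IPython notebook",
    ".rmd": "RMarkdown",
}


def external_format(filename):
    """Return the external format label for a file name, or None if unsupported."""
    # Assumption: extensions are compared case-insensitively (.Rmd and .rmd match).
    suffix = PurePosixPath(filename).suffix.lower()
    return EXTERNAL_FORMATS.get(suffix)
```

A helper like this could be used to check files before attempting an import.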

You can import an external notebook from a URL or a file.

- Click the Workspace button or the Home button in the sidebar. Do one of the following:

- Next to any folder, click the drop-down menu on the right side of the text and select Import.

- In the Workspace or a user folder, click the drop-down menu and select Import.

- Specify the URL or browse to a file containing a supported external format.

- Click Import.
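The same import can be done through the Workspace API mentioned at the start of this article. The sketch below only builds the JSON body for the import endpoint and sends nothing. The helper name and example path are hypothetical, and the field names should be checked against the Workspace API reference for your Databricks version.

```python
import base64
import json


def build_import_request(workspace_path, source_code, language="PYTHON", overwrite=False):
    """Build the JSON body for POST /api/2.0/workspace/import (nothing is sent)."""
    # The API expects the notebook content as base64-encoded text.
    encoded = base64.b64encode(source_code.encode("utf-8")).decode("ascii")
    return json.dumps(
        {
            "path": workspace_path,
            "format": "SOURCE",
            "language": language,
            "content": encoded,
            "overwrite": overwrite,
        }
    )
```

Sending the body requires an authenticated HTTP client, which is left out here on purpose.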

In the notebook toolbar, select File > Export and a format.

Note

When you export a notebook as HTML, IPython notebook, or archive (DBC), and you have not cleared the results, the results of running the notebook are included.
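For scripted exports, the Workspace API offers an export endpoint. The sketch below builds the request URL only. The host name, helper name, and short format keys are hypothetical, and the API format names (SOURCE, HTML, JUPYTER, DBC) are given from memory of the API reference and should be verified.

```python
from urllib.parse import urlencode

# Short keys are illustrative; the values are the format names the API expects.
EXPORT_FORMATS = {
    "source": "SOURCE",
    "html": "HTML",
    "ipython": "JUPYTER",
    "dbc": "DBC",
}


def build_export_url(host, workspace_path, fmt="source"):
    """Build the URL for GET /api/2.0/workspace/export (nothing is sent)."""
    query = urlencode({"path": workspace_path, "format": EXPORT_FORMATS[fmt]})
    return f"{host.rstrip('/')}/api/2.0/workspace/export?{query}"
```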

If you’re using Community Edition, you can publish a notebook so that you can share a URL path to the notebook. Subsequent publish actions update the notebook at that URL.

Notebooks and clusters

Before you can do any work in a notebook, you must first attach the notebook to a cluster. This section describes how to attach and detach notebooks to and from clusters and what happens behind the scenes when you perform these actions.

When you attach a notebook to a cluster, Databricks creates an execution context. An execution context contains the state for a REPL environment for each supported programming language: Python, R, Scala, and SQL. When you run a cell in a notebook, the command is dispatched to the appropriate language REPL environment and run.

You can also use the REST 1.2 API to create an execution context and send a command to run in the execution context. Similarly, the command is dispatched to the language REPL environment and run.
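A minimal sketch of the two REST 1.2 requests involved, assuming the endpoints /api/1.2/contexts/create and /api/1.2/commands/execute and the field names shown; both should be confirmed against the REST API 1.2 reference. The helper names and IDs are hypothetical, and the code only builds request bodies.

```python
import json


def create_context_request(cluster_id, language="python"):
    """Return (endpoint, JSON body) for creating an execution context."""
    body = {"clusterId": cluster_id, "language": language}
    return "/api/1.2/contexts/create", json.dumps(body)


def execute_command_request(cluster_id, context_id, command, language="python"):
    """Return (endpoint, JSON body) for running a command in an existing context."""
    body = {
        "clusterId": cluster_id,
        "contextId": context_id,
        "language": language,
        "command": command,
    }
    return "/api/1.2/commands/execute", json.dumps(body)
```

The context ID returned by the first call is what the second call passes as contextId.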

A cluster has a maximum number of execution contexts (145). Once the number of execution contexts has reached this threshold, you cannot attach a notebook to the cluster or create a new execution context.

Idle execution contexts

An execution context is considered idle when the last completed execution occurred past a set idle threshold. Last completed execution is the last time the notebook completed execution of commands. The idle threshold is the amount of time that must pass between the last completed execution and any attempt to automatically detach the notebook. The default idle threshold is 24 hours.

When a cluster has reached the maximum context limit, Databricks removes (evicts) idle execution contexts (starting with the least recently used) as needed. Even when a context is removed, the notebook using the context is still attached to the cluster and appears in the cluster’s notebook list. Streaming notebooks are considered actively running, and their context is never evicted until their execution has been stopped. If an idle context is evicted, the UI displays a message indicating that the notebook using the context was detached due to being idle.

If you attempt to attach a notebook to a cluster that has reached the maximum number of execution contexts and there are no idle contexts (or if auto-eviction is disabled), the UI displays a message saying that the current maximum execution contexts threshold has been reached, and the notebook remains in the detached state.
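The eviction rules described above can be modeled in a few lines. This is a simplified simulation for illustration, not Databricks code: the class and method names are hypothetical, time is measured in plain hours, and only execution contexts are tracked (not the cluster's notebook list).

```python
from dataclasses import dataclass, field

IDLE_THRESHOLD_HOURS = 24  # default idle threshold stated above
MAX_CONTEXTS = 145         # maximum execution contexts per cluster stated above


@dataclass
class Cluster:
    max_contexts: int = MAX_CONTEXTS
    idle_threshold: float = IDLE_THRESHOLD_HOURS
    auto_eviction: bool = True
    # notebook name -> (hour of last completed execution, is_streaming)
    contexts: dict = field(default_factory=dict)

    def attach(self, notebook, now, streaming=False):
        """Try to create a context at time `now` (hours); return True on success."""
        if len(self.contexts) >= self.max_contexts:
            victim = self._least_recently_used_idle(now) if self.auto_eviction else None
            if victim is None:
                return False  # limit reached: the notebook stays detached
            del self.contexts[victim]  # evict the idle context
        self.contexts[notebook] = (now, streaming)
        return True

    def _least_recently_used_idle(self, now):
        """Pick the idle, non-streaming context with the oldest last execution."""
        idle = [
            (last, name)
            for name, (last, is_streaming) in self.contexts.items()
            if not is_streaming and now - last > self.idle_threshold
        ]
        return min(idle)[1] if idle else None
```

With a limit of two contexts, a third attach fails until one of the existing contexts has been idle for more than 24 hours. Streaming contexts are never chosen for eviction.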

If you fork a process, an idle execution context is still considered idle once execution of the request that forked the process returns. Forking separate processes is not recommended with Spark.

Configure context auto-eviction

You can configure context auto-eviction by setting the Spark property spark.databricks.chauffeur.enableIdleContextTracking.

- In Databricks 5.0 and above, auto-eviction is enabled by default. You disable auto-eviction for a cluster by setting spark.databricks.chauffeur.enableIdleContextTracking false.

- In Databricks 4.3, auto-eviction is disabled by default. You enable auto-eviction for a cluster by setting spark.databricks.chauffeur.enableIdleContextTracking true.

To attach a notebook to a cluster:

- In the notebook toolbar, click Detached.

- From the drop-down, select a cluster.

Important

An attached notebook has the following Apache Spark variables defined.

| Class | Variable Name |
|---|---|
| SparkContext | sc |
| SQLContext/HiveContext | sqlContext |
| SparkSession (Spark 2.x) | spark |

Do not create a SparkSession, SparkContext, or SQLContext. Doing so will lead to inconsistent behavior.

Determine Spark and Databricks Runtime version

To determine the Spark version of the cluster your notebook is attached to, run:

To determine the Databricks Runtime version of the cluster your notebook is attached to, run:

Scala

Python
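The original commands are missing from this copy of the article. As a hedged sketch, the helper below reads both versions from the spark session that an attached notebook predefines. The helper name is hypothetical, and the configuration key spark.databricks.clusterUsageTags.sparkVersion is an assumption that should be verified on your cluster.

```python
def cluster_versions(spark):
    """Return the Spark and Databricks Runtime versions for a SparkSession."""
    return {
        # SparkSession.version reports the Apache Spark version.
        "spark": spark.version,
        # Assumed key for the Databricks Runtime version; falls back to "unknown".
        "databricks_runtime": spark.conf.get(
            "spark.databricks.clusterUsageTags.sparkVersion", "unknown"
        ),
    }
```

In an attached notebook, calling cluster_versions(spark) uses the predefined session rather than creating a new one.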

Note

Both this sparkVersion tag and the spark_version property required by the endpoints in the Clusters API and Jobs API refer to the Databricks Runtime version, not the Spark version.

To detach a notebook from a cluster:

- In the notebook toolbar, click Attached <cluster-name>.

- Select Detach.

You can also detach notebooks from a cluster using the Notebooks tab on the cluster details page.

When you detach a notebook from a cluster, the execution context is removed and all computed variable values are cleared from the notebook.

Tip

Databricks recommends that you detach unused notebooks from a cluster. This frees up memory space on the driver.

The Notebooks tab on the cluster details page displays all of the notebooks that are attached to a cluster. The tab also displays the status of each attached notebook, along with the last time a command was run from the notebook.

Schedule a notebook

To schedule a notebook job to run periodically:

- In the notebook toolbar, click the schedule button at the top right.

- Click + New.

- Choose the schedule.

- Click OK.

Distribute notebooks

To allow you to easily distribute Databricks notebooks, Databricks supports the Databricks archive, which is a package that can contain a folder of notebooks or a single notebook. A Databricks archive is a JAR file with extra metadata and has the extension .dbc. The notebooks contained in the archive are in a Databricks internal format.

Import an archive

- Click the drop-down menu to the right of a folder or notebook and select Import.

- Choose File or URL.

- Browse to or drop a Databricks archive in the dropzone.

- Click Import. The archive is imported into Databricks. If the archive contains a folder, Databricks recreates that folder.
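Because a Databricks archive is described above as a JAR file, and JAR files use the ZIP container format, Python's standard zipfile module should be able to list what an archive contains before you import it. This is a sketch under that assumption. The helper name is hypothetical, and the entry names inside a real .dbc file are not documented here.

```python
import io
import zipfile


def list_archive_entries(dbc_bytes):
    """Return the sorted entry names in a .dbc archive, read as a ZIP container."""
    with zipfile.ZipFile(io.BytesIO(dbc_bytes)) as archive:
        return sorted(archive.namelist())
```

To inspect a downloaded archive, read the file in binary mode and pass its bytes to the helper.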

Export an archive

Click the drop-down menu to the right of a folder or notebook and select Export > DBC Archive. Databricks downloads a file named <[folder|notebook]-name>.dbc.